| Type | Description | Storage Behavior |
|---|---|---|
| Pull | Direct integrations that pull data from vendor API endpoints on an ongoing basis to retrieve logs, alerts, or contextual information | Stored in Radiant-managed storage or customer S3 (BYOB) |

| Type | Description | Storage Behavior |
|---|---|---|
| Pull | API integrations that query for relevant data at the time of triage, retrieving information on-demand | Not stored in Log Management; queried in real-time |

| Type | Description | Storage Behavior |
|---|---|---|
| Push | Standard protocol (UDP/TCP) used by systems and network devices to stream real-time log messages to Radiant | Stored in Radiant-managed storage or customer S3 (BYOB) |

| Type | Description | Storage Behavior |
|---|---|---|
| Push | Vendor-initiated HTTP callbacks that push events to Radiant instantly whenever new activity occurs | Stored in Radiant-managed storage or customer S3 (BYOB) |

| Type | Description | Storage Behavior |
|---|---|---|
| Push/Pull | S3-compatible cloud storage location where customers deposit raw log files that Radiant periodically retrieves and ingests | Stored in Radiant-managed storage or customer S3 (BYOB) |

| Type | Description | Storage Behavior |
|---|---|---|
| Push | Lightweight software agent installed in customer environments to collect and forward raw logs from on-premises systems securely | Stored in Radiant-managed storage or customer S3 (BYOB) |

| Field name | Description |
|---|---|
| rs_timestamp | Standardized timestamp extracted from the original log. |
| rs_received | Timestamp when Radiant received the log. |
| rs_indexed | Timestamp when the log was indexed in Radiant. |
| rs_connectorType | Identifies the source connector that ingested the data (e.g., microsoft_windows_security). |
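
The three timestamp fields above can be compared to estimate how long an event took to reach Radiant and to be indexed. The following is a minimal sketch, not Radiant's implementation: it assumes the timestamps are ISO 8601 strings (the format is not specified here), and `parse_ts`, `ingestion_delays`, and the sample values are hypothetical.

```python
from datetime import datetime


def parse_ts(value: str) -> datetime:
    """Parse an ISO 8601 timestamp (assumed format); a trailing 'Z' is read as UTC."""
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def ingestion_delays(event: dict) -> dict:
    """Return the delays, in seconds, between the three Radiant timestamps."""
    ts = parse_ts(event["rs_timestamp"])
    received = parse_ts(event["rs_received"])
    indexed = parse_ts(event["rs_indexed"])
    return {
        "source_to_received": (received - ts).total_seconds(),
        "received_to_indexed": (indexed - received).total_seconds(),
    }


# Hypothetical event carrying the metadata fields from the table above.
sample = {
    "rs_timestamp": "2024-05-01T12:00:00Z",
    "rs_received": "2024-05-01T12:00:02Z",
    "rs_indexed": "2024-05-01T12:00:05Z",
    "rs_connectorType": "microsoft_windows_security",
}
delays = ingestion_delays(sample)
```

A large `source_to_received` value would point at delay on the source or connector side, while a large `received_to_indexed` value would point at processing time after receipt.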

| Field name | Description |
|---|---|
| rs_sc_host | Radiant Agent host identifier. |
| rs_sc_tag | Radiant Agent tag for log categorization (e.g., winlog.security_events). |
| rs_src_host | Radiant Agent source hostname where the event originated. |
| rs_src_ip | Source IP address where the event originated. |

| Field name | Description |

|---|---|

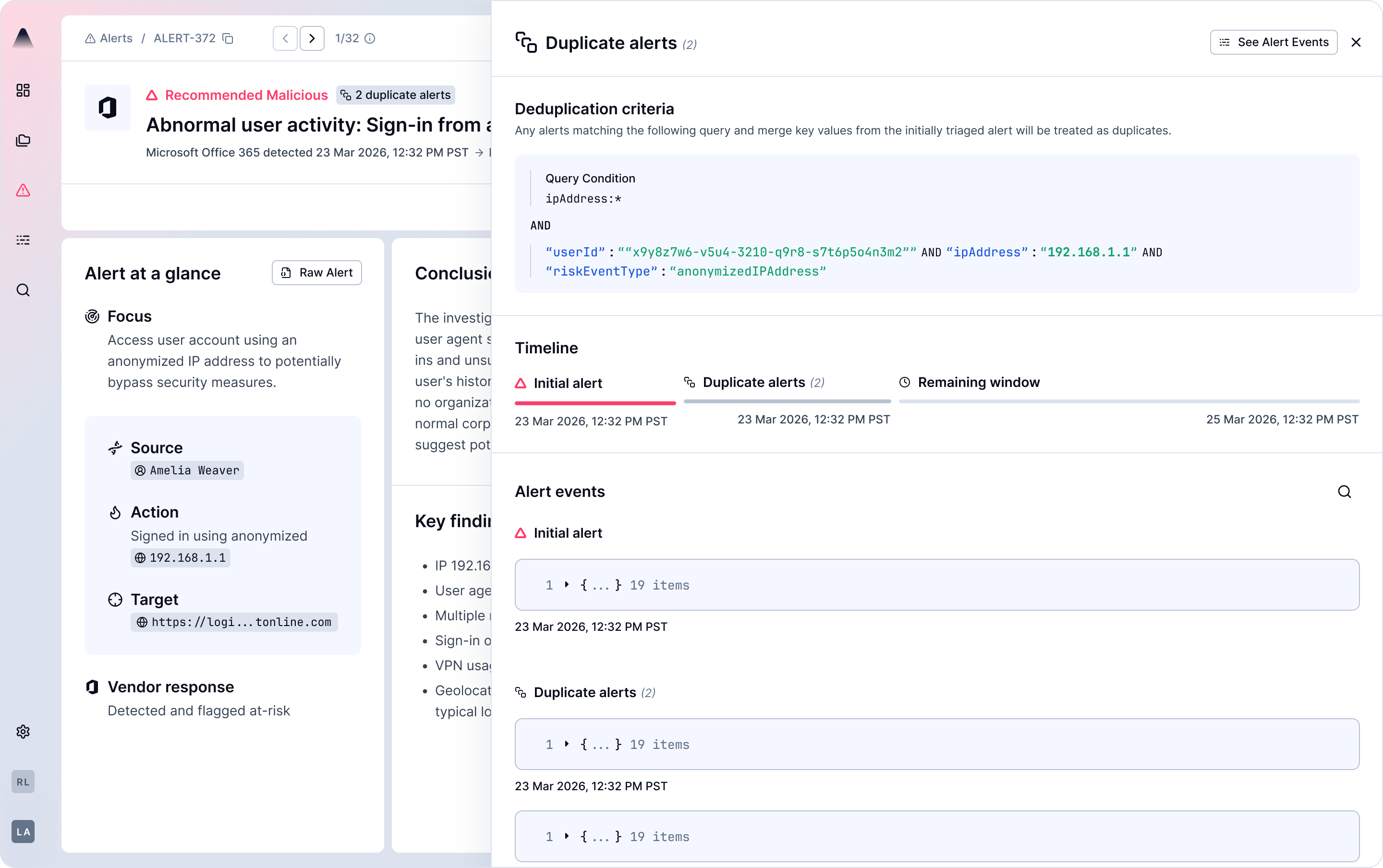

| rs_alertID | Unique identifier for the alert. |

| rs_parentAlertID | Reference to the originally triaged alert when deduplication occurs. |

| rs_filterRule | Identifier for alerts that were suppressed. |

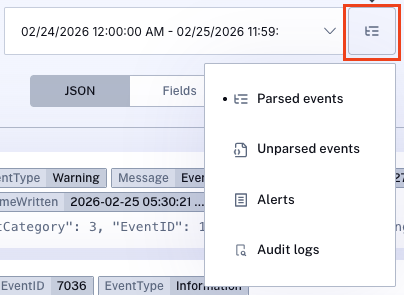

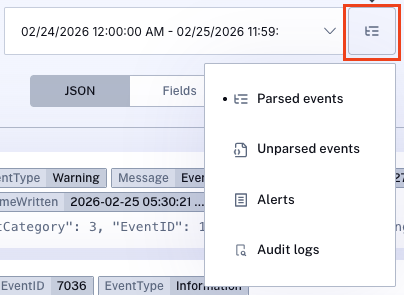

| Index | Contents | Purpose |
|---|---|---|
| Parsed events | All alerts and events successfully processed, with Radiant metadata fields appended (e.g., rs_timestamp, rs_connectorType) | Primary queryable log repository; includes filtered alerts for compliance |
| Unparsed events | All alerts and events that generated parsing errors and could not be processed | Error handling, troubleshooting, and diagnosing connector configuration issues |
| Alerts | All initial and duplicate alerts that passed filtering and are eligible for triage (filtered alerts are excluded) | Active alert investigation and triage workflows |
| Audit Logs | Detailed record of user actions within the Radiant application | Compliance tracking, change management, and internal security monitoring |
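
The routing described in the index table can be sketched roughly as follows. This is an illustrative reading of the table, not Radiant's actual pipeline: the function `target_indexes` is hypothetical, and it assumes that an alert is recognized by the presence of `rs_alertID` and a suppressed alert by a non-empty `rs_filterRule`. Audit Logs are left out because they record user actions rather than ingested events.

```python
from typing import List


def target_indexes(record: dict, parsed_ok: bool) -> List[str]:
    """Return the indexes a record would land in, per the table above."""
    if not parsed_ok:
        # Records that failed parsing go only to the unparsed index.
        return ["Unparsed events"]

    # Every successfully parsed record, including filtered alerts, is kept here.
    indexes = ["Parsed events"]

    is_alert = "rs_alertID" in record              # assumption: marks an alert
    is_filtered = bool(record.get("rs_filterRule"))  # assumption: marks suppression

    # Only alerts that were not filtered are eligible for triage.
    if is_alert and not is_filtered:
        indexes.append("Alerts")
    return indexes


# Hypothetical records for illustration.
plain_event = {"rs_connectorType": "microsoft_windows_security"}
triage_alert = {"rs_alertID": "a-1"}
filtered_alert = {"rs_alertID": "a-2", "rs_filterRule": "rule-7"}
```

Under these assumptions, a filtered alert remains queryable in Parsed events for compliance purposes but never reaches the Alerts index.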

| Verdict | Description |
|---|---|
| Recommended Malicious | The AI found sufficient evidence to suggest the alert represents a genuine threat |
| Recommended Benign | The AI found sufficient evidence to conclude the alert is not a genuine threat |
| Likely Benign (Inconclusive) | The AI could not reach a confident conclusion with the available data |
| Malicious | Confirmed malicious verdict (email use case) |
| Benign | Confirmed benign verdict (email use case) |
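
When consuming verdicts programmatically, it can help to model the five labels as an enumeration and to separate confirmed verdicts from recommendations. The sketch below is an assumption-laden illustration: the `Verdict` enum, the `CONFIRMED` set, and the `verdict_kind` helper are hypothetical names, and only the label strings come from the table above.

```python
from enum import Enum


class Verdict(Enum):
    RECOMMENDED_MALICIOUS = "Recommended Malicious"
    RECOMMENDED_BENIGN = "Recommended Benign"
    LIKELY_BENIGN_INCONCLUSIVE = "Likely Benign (Inconclusive)"
    MALICIOUS = "Malicious"
    BENIGN = "Benign"


# Per the table, these two are confirmed verdicts (email use case).
CONFIRMED = {Verdict.MALICIOUS, Verdict.BENIGN}


def verdict_kind(label: str) -> str:
    """Classify a verdict label as 'confirmed', 'inconclusive', or 'recommended'."""
    verdict = Verdict(label)  # raises ValueError for an unknown label
    if verdict in CONFIRMED:
        return "confirmed"
    if verdict is Verdict.LIKELY_BENIGN_INCONCLUSIVE:
        return "inconclusive"
    return "recommended"


kinds = [verdict_kind(v.value) for v in Verdict]
```

Looking labels up through `Verdict(label)` means an unexpected string fails loudly instead of being silently treated as benign.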